Pilot Study: Mobile Phone Meta Data Records – Introduction to the research method

Accompanying the technical report “Estimating Hourly Population Flows”About this publication

Anonymised aggregated mobile phone meta data may serve as a basis for indirect estimates of the number of residents that are present in their municipality and the number of visitors to this municipality at a particular time. This is the conclusion that can be drawn from the pilot study.

1. Introduction

Anonymised aggregated mobile phone meta data may serve as a basis for indirect estimates of the number of residents that are present in their municipality and the number of visitors to this municipality at a particular time. This is the conclusion that can be drawn from the pilot study. These estimates can also be used in official statistics. This “present population” is also called day population oror daytime population is based on registered residents who spend most nights in that municipality.

By Eurostat as well, mobile phone call data are now considered to be a vital source for the compilation and enhancement of official statistics on tourism, economy, environment, crowd flows and mobility. This is also what has spurred Statistics Netherlands (CBS) to research the use of aggregated anonymised mobile call data for statistics since 2009. CBS is no exception in this regard. Around the world, organisations such as universities, national statistical offices, but also commercial organisations are developing methods and software for the analysis and processing of telephony data for the purpose of statistics, science and policy development.

Raw and insufficiently aggregated mobile network data or traffic data are extremely privacy sensitive. During setting the processing method, not only existing legislation requiring the data to be anonymised was taken into account. A series of specific risks were taken into consideration in advance and preconditions have been drawn up for the research to be carried out. These are discussed in chapters 3 and 4. They describe how statistical security measures have been integrated into the design of the method prior to the research. Applying only one measure is not sufficient. Therefore, a combination of several measures as a whole provides the statistical security to ensure privacy.

This paper is an introduction to the technical report [1]. The report describes the mathematical methods that are applied at the Mobile Network Operator (MNO). As a statistical institute, CBS safeguards independence, continuity (guarantee of delivery), international comparability, quality frameworks and transparency of the algorithms. In other words, the algorithms and methods on which the statistics are based must be 100% explainable and transparent. The reports of the study are therefore also made public. This pilot study was completed in 2019.

This paper is made up of several chapters. Chapter 2 briefly discusses the basic principles of the method and roughly describes the processing process. In chapter 3 all the steps of the process are described in detail. Finally, chapter 4 discusses the privacy aspects of the method on the basis of a number of international publications that refer to individual disclosures from aggregated datasets with origin-destination information.

2. Fundamentals of the process

The procedure of processing signalling data into statistical information consists of 9 steps which are explained in detail in chapter 3. It concerns automated processing which ultimately results in aggregated anonymised data.

The source data is not delivered directly to CBS as is customary in other statistical processes within CBS. Instead, the data has already been anonymised and aggregated at the MNO. Therefore, CBS does not receive any traceable traffic data but only anonymised and aggregated information from this data.

Figure 2.1 shows the software modules associated with the 9-step process. These were designed in such a way as to adhere as closely as possible to international standards currently being developed worldwide. Chapter 3 refers to these modules.

3. Method description in 9 steps

3.1. Processing steps taking place at the MNO

3.1.1 Step 1 Description of source data already available at the MNO and pseudonymisation

Source data

The source data according to this method are signalling data, i.e. data regarding events that take place between the device and the network and are recorded in the datacentre of the MNO. The signalling data are generated by mobile phones connecting with the mobile phone network, for example because of a phone call, a data connection being started, or a text message being received. This is registered at the switchboard and stored for up to 6 months. It is not the content of the communication but the time, duration and cell to which that particular event is assigned. A cell is part of an antenna; an antenna covers an area consisting of one or more cells.

There are various types of event-based source data, depending on which events are stored. Besides signalling data, these include for example Event Data Records (EDR) and Call Detail Records (CDR). Signalling data contains the most events. These data are often generated separately for 2G, 3G, 4G, and 5G networks. The latter is irrelevant under this method. The point is that sufficient data points of this type (i.e. events that are recorded) are available per device. The more events are available, the better this is for the estimates. Table 3.1 has been borrowed from publication of Positium [2] and shows the differences in numbers of events between different types of data.

From the signalling data, only a double pseudonymised ID, the exact time and the antenna ID (number of the antenna used) of each active connection are generated as source dataThen, static reference data such as the cell plan (where the antennas are located) are added.

Incidentally, the method can also handle Timing Advance data as a special form of an event, but this falls explicitly outside the scope of the pilot. Special location determination techniques such as those offered by network providers for 4G and 5G networks also fall outside the scope of this project (and are therefore not used).

Storage and pseudonymisation of source data

The method begins with the processing and storage of signalling data relating to subscribers and users of the MNO's communications services. This is an existing process at the MNO. All subsequent steps are derived from it. As mentioned above, the legal retention period of the signalling data is 6 months.

Signalling data are pseudonymised and provided with an identification number. This is an existing internal security procedure. It is a common internal action in data processing that is also used by other data processors in sectors other than telephony to prevent direct traceability of individuals within an organisation. This pseudonymisation ensures that all directly traceable information is extracted from the data (telephone number, IMSI, etc.). The employees of the telecom company have signed for confidentiality and are not allowed to engage in any activities that lead to disclosure.

3.1.2 Step 2 Second pseudonymisation

As a second step, the MNO pseudonymises the signalling data again, i.e. the keys are changed constantly and cannot be stored. This process is repeated every 30 days for all pseudonymised numbers. Among other things, this counteracts disclosure (by researchers themselves within the company) of groups of people (often VIPS with security) whose agendas are publicly known. Much more important is that this process is irreversible. The employees of the telecom company who process the data cannot go back to the original data.

Further on in the process, considerably more privacy safeguards are built in. Nevertheless, also in this process step, the risks of traceability of the data within the MNO have already been considered. The risks of traceability of the simulated data by the researchers themselves have been examined. We see two risks. The first risk consists of cracking the key. The second is the risk of disclosure on the basis of pseudonymised signalling data, as indicated in the recommendation of the Article 29 Working Party (hereinafter WP29).

Cracking the key is difficult and takes a huge amount of computing power. As indicated in the European WP29 advice, the tracing of individuals by estimating their location based on signalling data has become extremely difficult due to the second pseudonymisation, in which no key is produced. The literature mentions that disclosure is easily achieved if a few of the person’s locations are known. However, that theory does not apply to this particular practice.

Suppose that someone makes a deliberate illegal attempt to disclose individual (telephony) data based on estimated geodata from signalling data.

First, sorting has to take place from a dataset that contains 40 to 200 billion records per month. This is extremely difficult, after all, the IMSI or other variable is unknown.

Sorting based on pseudonymised data from within the telecom company requires collecting extra information on someone’s agenda with at least 4 accurate locations per day, plus the associated time of day. A large part of the route has to be known in advance in order to enable tracing. It is not enough to merely know someone’s work location and place of residence. A total of 4 locations is needed in order to achieve a 95% success rate.

Then, the known elements (places and times) must be translated into a route of cells and antennas that have to retrieved from the source dataset. There will be false positive matches that lead to the wrong antenna, and so to the wrong corresponding encrypted and as such pseudonymised IMSI. This results in quite a labour-intensive process. It will also require writing of extra software. The above is not possible with the existing process algorithms. “Route information” simply is not included anywhere in the process.

This implies that an illegal automated process would need to be devised within the walls of the telecom provider in order to establish the route that is taken by an individual. That route will not contain exact locations such as those enabled by GPS data but areas that may vary per location. This exercise is many times more complex and arduous than if it were taking place in a “regular” dataset such as that of a survey. We estimate that any attempt to trace such information would take at least 4-5 days’ work plus an excessive amount of computing power that is generated from the existing IT facilities. Very few employees at the telecom company have access to the original signaling data. All of them have signed a confidentiality agreement. Then, a conspiracy would need to be formed between said employees, the data analysts at the company and the employees in charge of IT security.

After all, there are security checks in place within the IT environment of the telecom provider that will identify any excessive use of computing power and there is logging of the actions performed by employees. Although the inherent residual risk of deliberate re-identification is by no means zero, it is many times harder to achieve than with a regular dataset such as information at the tax authorities or in medical file systems. The trouble involved in active disclosure combined with the security precautions effectively result in an extremely remote risk. Tracing of information using other more traditional datasets would be achieved more easily, mainly because of the matches with unique variables such as age or sex, which are completely absent in this case.

3.1.3 Step 3 Producing location estimates (MobLoc module)

In the section MobLoc, the location is estimated. That location is estimated with the help of a Bayesian model, using the coverage area of a cell. In order to arrive at the most reliable aggregated estimates, the devices are redistributed and disseminated. This means no exact location is defined such as with GPS data, but an estimate that takes into account the probabilities . The aim of this step is to provide estimates of the number of devices per municipality, based on the signalling data. This involves the use of the antenna map (reference data).In addition, publicly available information may be proposed by Statistics Netherlands to enhance results, such as land use and elevation maps. This concerns static information.

The algorithm used for location estimation works as follows: the land is divided into grids measuring 100x100 metres. These are grids that (similar to pixels) are easily translated into the fluid borders of municipalities. In cases where a device connects to a cell, the probability of the device being located in a particular grid is estimated for all grids located nearby. This involves the use of data from the antenna map, such as location of the antennas / cells as well as the physical properties such as height and direction angle. These probabilities are multiplied by prior probabilities. These are standardised estimates of the number of devices per grid produced on the basis of auxiliary information, such as the degree of urbanisation. The estimated probabilities are called posterior probabilities.

The cell IDs from the data are linked to these posterior probabilities, so that an estimate is made of the number of devices per municipality. The location is therefore not very precise: statistical noise is added because of this this step. An additional effect of this method is that numbers of devices are "scattered" in the space and thus redistributed. There is dispersion over time as well. The model works in one-hour batches. If there is only one measurement in a specific hour, this data is extrapolated over other minutes within that hour. There is no spread between the hours as a unit of time. The dispersion in space and time causes an extra noise that changes composition every hour. This means that the relationship between the counts per area and the counts per mast is a system of linear equations, with fewer equations than unknowns and therefore not uniquely invertible.

We illustrate this with an example. Suppose we obtain the respective probabilities of 0.5, 0.3 and 0.2 for a device number one in the hour 8:00-9:00 across municipalities A, B and C. It means we consider the probability greater that the device was located in municipality A, but leave this probability distribution as it is - we do not definitively assign it to A. Suppose that for another device 2 in the same hour we estimate a probability distribution for municipalities A, B, and C to be 0, 1, and 0. In other words, we suspect that the device was located in municipality B for the entire hour. Then we add up the probability distributions for both devices: 0.5, 1.3 and 0.2. The resulting estimate is that there were 1.3 devices in municipality B.

3.3.1.4 Step 4 Adding the place of residence (module MobProp(erties))

In this step, the municipality of residence is estimated. In this pilot, an indication for the place of residence is that the mobile device is most frequently located in the vicinity of a certain antenna. Again, this is not a pinpoint and uses information from Mobloc, an estimate of the location. Finally, the "residential population" counts are estimated. Each mobile device is assigned to a so-called residential antenna. This is the antenna to which the device has been connected most of the time during the observation period. The mass of the device is then distributed over the municipalities in the range of the residential mast. In general, therefore, the device is assigned to more than oneresidential municipality - it is a probability distribution over one or more municipalities.

3.1.5 Step 5 Automatic data processing and thickening (MobCube module)

The dataset is condensed by making a selection of an area and a time period and processing it in MobCube. This last step is irreversible because a lot of information is destroyed (80-90%). After this step there are aggregated counts left and there are no longer any individual records. Basically, one estimated device is equivalent to one aggregated calculation.

The data from MobLoc and MobProp are both processed automatically and aggregated into statistical information. This is actually a count. An example of such a count is described in figure 3.1. If a person resides in the municipality of Maastricht and is in the municipality Utrecht at 12:00 PM, then this is counted in the table cell (column Residence) Maastricht and (row) Utrecht. If the same person is in the municipality of Eindhoven at 11:00 AM, they will be counted in the cell Maastricht (Residence column) and the cell Eindhoven (row). There is no link between the different hour counts and therefore no route (Maastricht-Eindhoven-Utrecht) can be calculated with these results.

3.1.6 Step 6 Output check on anonymised data and transmission (MobSafe module)

After MobCube, there is virtually no traceable data left in the dataset. It is possible that some table cells contain very small numbers of counts, for example the number of people residing in a small municipality and present in another municipality that is not nearby. Therefore, in order to avoid any risk of disclosure, a definitive statistical protection in MobSafe takes place in which all output with a count of less than 15 devices is permanently removed. These table cells remain empty.

Again with this step, information is lost. The MNO eventually performs a manual check as well to see whether the process has been carried out correctly. The outcome of this process at the telecom company concerns aggregated and anonymised information that is delivered to CBS. In terms of format, this is the same table as described in Figure 3.1. However, the N>15 line once again destroyed a large part of the data. This is also the case for more than 80% of the cells in this table (see also chapter 4). These will obtain a missing value (a dot or a dash). The result of the technique applied in steps 1 through 6 is permanent. There is no possibility of deducibility due to all the measures in place. Moreover, linking with external datasets could not lead to disclosure. It is therefore impossible to trace the aggregated and anonymised information to individual personal data from this table.

The checked anonymised aggregated data is sent to Statistics Netherlands via a secure connection. The information is treated as highly business-sensitive as it provides insight into the distribution across the country and at the municipal level of subscribers of the MNO. The information is therefore encrypted before it is sent.

3.2 Further processing at CBS

3.2.1 Step 7 Correction of data (MobCal (ibration))

Corrections take place at CBS in order to arrive at representative data on the Dutch population based on the received data. After all, the objective is to say something about the population and not about mobile devices or the population registered by telecom providers. This correction takes place on the basis of registration data from the Personal Records Database (BRP) according to a weighting model. The data at the municipal level (i.e. not at the personal level) may also be enriched with other CBS data, e.g. from the vacation survey or other register information.

3.2.2 Step 8 Processing towards relative values

The weighting in step 7results in an adjusted count. The counts have not yet been validated in this pilot. This means that it is uncertain whether the numbers are the correct numbers for all municipalities. This has not yet been checked. For the visualisation in the beta product, the values are therefore translated into relative differences. This means that the numbers are translated into percentages of people originating from and destined for a municipality. For example, at 12:00 PM, 8% of all Amsterdam residents left for Almere. This is mainly related to the quality of the results, but may also be seen as an extra precaution against group disclosure with outside information. The format remains the same as described in figure 3.1, but it concerns ratios / percentages that can be read in the visualisation at a later stage (MobVis).

3.2.3 Step 9 Publication

The results have been made publicly available in a new visualisation (MobVis) that has been specially developed for this purpose.

4. Privacy

The Dutch Telecommunications Act requires the data leaving the telecom company to be anonymous. In this context, the Dutch Data Protection Authority (DPA) referred to a scientific article [2] describing a method for disclosure of trajectories of mobile phones from aggregated telephone data. This chapter discusses the method described in this article in more detail and answers the question to what degree this affects the anonymity of the data.

The starting point is the dataset that is delivered to CBS by the telecom provider. These are aggregated data containing estimated counts of the devices located in a given municipality from a given municipality of residence at a given hour (the so-called flow cube). This chapter is organised as follows:

- In section 4.1, the inverse problem is explained and how it is introduced in the process. Here it is examined to what extent it is technically mathematically possible, on the basis of the latest knowledge, despite all the precautions, to trace back directly to an individual. In this section the anonymity is mathematically demonstrated. In this context, an opinion proposed by the DPA referred to a scientific article [3] [4] from China which describes a method to trace the trajectories of mobile phones from aggregated telephone data. The method cited in the article to ''crack'' outcomes to traceable outcomes is fairly novel but also runs into this fundamental inverse problem. The article describes a complex method. It requires extensive mathematical analysis to be able to interpret it correctly. In section 4.1.3, as a supplement to the mathematical approach, these Chinese articles are also discussed in more detail and why they cannot work with the method CBS used in the pilot.

- Section 4.2 discusses the hypothetical case in which it is assumed the attacker (this can be within CBS itself, or an external hacker with access to data from, for example, a "big tech" company) has a very large amount of information (the most extreme scenario). Is it possible to determine the location of someone's device (by means of a flow cube)? This approach is described in section 4.2. It concerns an advanced attack that goes beyond the scope of the scientific article from China in section 4.1.

- Finally, the phenomenon of group disclosure is described in section 4.3. It concerns the possibility of identifying groups from the output data and what safeguards are taken to limit the risk of this as much as possible. Strictly speaking, this has nothing to do with the anonymity of the individual. On the contrary, monitoring groups is an important application for combating COVID-19. Nevertheless, it is important to pay attention to this as well.

4.1 Basic mathematical principles for anonymity according to the CBS method

4.1.1 The classic inverse problem

The basic mathematical principle applied by CBS for data security comes from the field of algebra.

"When a consistent system of independent linear equations over the real numbers has a smaller number of equations than unknowns, the solution set is infinitely large.

For example, if one adds together five numbers and publishes only the sum, it is impossible to retrieve the original numbers. This principle is the main reason why the data provided by the telecom providers are anonymous. Thus, mathematically speaking, no reverse calculations can be made, whichever method or technique is used.

The relationship between individual objects, such as mobile phones or similar electronic devices, and a measurement such as a count of those objects is a mathematical equation. The most important and irreversible procedure in every step of a processing process is therefore to deliberately not carry out certain counts. This irreversible destruction of information ensures that none of the partial steps can be reversed to lead to an individual device. This applies a fortiori to the entire process: from signalling data to the aggregated anonymised dataset provided by the telecom company to CBS.

4.1.2 The inverse problem that arises in the process

For a precise and complete description of the process steps refer to chapter 3 and the technical description [1]. In this paragraph a number of specific steps which are important in the process to arrive at anonymous telephone data will be discussed.

Step 1 to 6: Processing by the telecom company (takes place within the infrastructure of the telecom company)

Every 30 days, all pseudonymised data are re-pseudonymised at the operator without a key being generated (which can therefore not be stored). The double-pseudonymised data contains links between a mast and a device at a time of exchange of a contact signal or data. The links between mast and device are still being edited. There are two reasons for this:

- Content-related: whether a link is being established at a certain time between an antenna and a device not only depends on physical proximity but also on signal strength that is not uniform around an antenna, and on the availability of signal processing capacity by the antenna. Within a time interval of one hour, devices often exchange signals with several masts in random order. It is therefore statistically purer to weigh each of the individual mast-device links over a number of masts.

- Security-related: because of anonymity. Because links are divided over a number of antennas, and then numbers of fractional links are added per mast, the 1-to-1 relationship between an individual antenna and a device is irretrievably disrupted.

The addition per mast should always be weighted across municipalities, because the sensitivity area of each mast covers several municipalities. Therefore, there is no unique, reversible relationship between the counts per area or mast and the number of devices involved per area or mast: there are more unknowns than there are measurement data, so the mathematical basic principle applies that the unknowns can no longer be determined. Information is deliberately destroyed to prevent identification. In chapter 3, the exact operation of the location census algorithm is given as follows:

- ".... The location is therefore not very precise: statistical noise is added in this step. An additional effect of this method is that numbers of devices are "dispersed" in space and thus redistributed. Also in the time dimension there is a dispersion. The model works in batches of one hour. If there is only one measurement in a particular one hour time span, this data is extrapolated over other minutes within that hour. There is no spread between the hours as a unit of time. The dispersion in space and time causes an extra noise that changes composition every hour. This means that the relationship between the counts per area and the counts per mast is a system of linear equations, with fewer equations than unknowns and therefore not uniquely invertible.

- We illustrate this with an example. Let’s calculate the probabilities of 0.5, 0.3 and 0.2 for a device with number 1 in the hour 8:00-9:00 AM across municipalities A, B and C. So we consider the probability of device 1 being in municipality A to be greater, but leave this probability distribution as it is - we do not directly assign it to A. Suppose that for another device 2 in the same hour we estimate a probability distribution for municipalities A, B, and C to be 0, 1, and 0. In other words, we think that the device was probably completely in municipality B in that hour. Then we count the probability distribution for both devices on: 0.5, 1.3 and 0.2. So we estimate that there were 1.3 devices in municipality B."

In addition, it should be noted that the range of antennas varies greatly, from 5 metres to 60 kilometres across municipal boundaries. The angle can also vary from 2 degrees to 360 degrees. As prescribed, the telecom company applies the rule that if the counts in a certain area fall below the lower limit of 15 within a certain period of time, that data is suppressed and thus destroyed. The total number of devices per time interval in the final dataset that comes to CBS fluctuates greatly. After all, if a device has not connected to the network for a telephone call or text message in a span of one hour, there is no registration for that device for that hour, and that device is not counted in the dataset provided to CBS for that hour. The population is therefore not constant in size and certainly not in composition. The fluctuations are amplified by the suppression of small counts as described above.

It is important to mention this here because it is an essential requirement for the paper[3], for example, that the population of devices is constant in composition and size.

When these datasets arrive at CBS, several irreversible operations have been carried out that have destroyed information, so that even with the data and resources available to CBS itself, identification is no longer possible. The data received by CBS is therefore anonymous.

Steps 7-9 Statistical output

The number of different counts made (per municipality) has a complicated and non-reversible relationship with the number of devices counted. The number of unknowns is in turn greater than the number of comparisons: the counts at municipality level. The "standard" CBS statistical security for CBS publications is applied here once again. As an extra precaution, the numbers to be published have been adjusted to relative values. In this step, information is again and deliberately destroyed in order to render it impossible for an external party to even reconstruct counts per mast, let alone trace the movements of individuals.

4.1.3 Discussion of academic publications

Paragraphs 4.1.1 and 4.1.2 explained how identification is rendered impossible as it is a violation of the aforementioned basic mathematical principle. In addition to the paper [3] (quoted in the introduction), other papers [4],[5] have been published which explain techniques aimed at disclosing information about the carrier or owner of a device based on the path of that device. There is significant academic interest in this topic, so there are many more papers in this field that have been or will be published. It is not feasible to discuss all existing literature in this area individually.

However, all such methods must, in order to work, be applicable to data in a process in which the aforementioned basic mathematical principle explicitly or implicitly does not apply. In other words, direct back calculations to reveal individuals are not possible. These papers are mostly aimed at approaching the individual with a certain probability.

Most methods turn out not to be applicable to outcomes such as those produced according to the method described above. The design of the method therefore makes these cracking methods inapplicable. In part of the published papers it is assumed that there are 'tracks' available in which the successive antennas with devices are stored and processed as a whole.

The fundamental assumption for these methods is that the 1-to-1 relationship between device and mast or area is preserved. This is in violation of the method that is used by the telecom provider in step 1 (and step 3). Therefore, the conclusions of any paper referring to such tracks do not apply to the method used by CBS, of which the MobLoc module forms an essential part. In addition, it remains necessary for these types of methods to leverage additional external identifying information so that a certain track of a device can be linked to a person.

The data generated by the CBS method is of a completely different type than the data in the paper [3]. The most important assumption in the paper [1] is that in consecutive time intervals there are identical 1-on-1 devices in the dataset, where moreover each device is connected 1-on-1 to an antenna. The 1-on-1 connection of antenna and device is prevented in step 1 of the process discussed above.

It is unclear whether the procedure described in the paper can be adapted to this method. This requires additional research. In this article a relaxation for the algorithm is applied based on a number of assumptions to make the calculation possible. It is likely that a relaxation for the situation with millions of devices that should lead to an acceptable calculation time will lead to a reduction in accuracy, resulting in more "incorrect" routes being found. Even if the computing power is present, the desired accuracy leading to identification cannot be achieved.

The former paper[3] also uses tracks, but does not take them as a starting point. Instead, counts per antenna are linked in consecutive time intervals so that tracks can be constructed. Here, model assumptions are used for typical device movements (patterns). After all, many people are known to have the same patterns of movement behaviour. To solve this problem, the so-called 'Hungarian algorithm' is used. There are several assumptions inherent to the method as described in that paper, as a result of which the conclusions cannot apply to the process steps followed by Statistics Netherlands. The number of devices in the paper[3] is many times smaller than the data based on the analyses carried out in this pilot (100 thousand versus 21 million), while the number of locations for which aggregates are determined in that paper is much larger than the data (at municipal level only) that will be provided to CBS (8,600 versus 400). The difference between the number of equations to solve and the number of unknowns is therefore many times greater for the data provided to CBS than is the case in the paper. This would amount to an order of magnitude of 1·1018. This makes it impossible to process this procedure with CBS's currently available computer power. As far as we know, the procedure described in the article can only be performed on such a dataset by four supercomputers in the world (exa-scale computing).

Let's take a look at the following thought experiment belonging to the cracking method from Tu's article [3]. For the sake of convenience, we assume that a device can only be located in one municipality for one hour1). Consider the trajectories of two devices with the same home municipality, and change the two municipalities in their trajectory for a certain hour2).

In that case, the aggregated flow cubes3) before and after the exchange are identical. This simple example already shows that multiple path data sets can lead to the same flow cube after aggregation. However, it is not clear from this argument whether, and to what extent, the flow cube can contribute to the identification of individuals. It could be that some path combinations are more likely to be plausible than others. This plausibility information could add an attacker to the problem in order to make a better estimation of the original paths. So suppose an attacker has extra data (such as general mobility patterns, or data from a specific individual) next to the flow cube. To what extent is it then possible to solve parts of the equations after all?

The assumption that all devices are present in the data at all times is an idealised situation that does not occur in real datasets, such as the dataset to be provided to Statistics Netherlands. However, this is crucial for the Hungarian algorithm (an optimization method) as described in [3] to work. As soon as even one device disappears or appears in a time interval, that algorithm cannot be operationalized. In the paper[3], therefore, devices are completely omitted from the population or are interpolated. The academic method described in the paper[3] is therefore not applicable to the dataset that CBS processed in the pilot.

The paper[3] also indicates that extra information is needed to link a reconstructed trajectory to a recognizable individual. In the paper a reconstructed trajectory is "unique" if it can be linked to a unique trajectory within the "ground truth" on the basis of n top locations. It is then assumed that a unique trajectory within the "ground truth" can be linked to a unique person in the population. For this last step extra information is needed, which also needs to be uniquely linked to a recognizable person.

It is also indicated in the paper[3] that in general it can be said that every algorithm or method that can identify a unique device should assume that every step in the processing of the raw telecom data is uniquely invertible. On the contrary, the mathematical basic principle ensures that each processing step is not invertible, so disclosure is not only unlikely or very computationally intensive, but mathematically impossible. The next question is whether an attacker with additional information can still not approach the truth. In section 4.2 this is discussed in more detail by means of another extreme attack scenario.

For the sake of completeness, this paper incites several other critical points as follows[3]:

- An N>15 eliminates all unique routes that one could quickly compose based on low numbers of data.

The uniqueness of a route is not mentioned in the article, it only describes the percentage of unique routes found based on the TopK (most visited) locations “…uniqueness measures the possibility that he can uniquely distinguish victims' recovered trajectories. Therefore, we define the uniqueness as the percentage of recovered trajectories that can be uniquely distinguished by their most frequent k locations (Top-K)”. Subsequently, external information is needed to link that trajectory to an individual; Therefore, the results indicate that the recovered trajectories are very unique and vulnerable to be reidentified with little external information.”

What external information is needed is not mentioned in the article, but it is plausible that the authors mean that one knows which TopK locations belong to a person. Whether it really is the right person (is that person the only one with those TopK locations) is not investigated, because it is assumed that a person / device can be identified by a unique TopK . So a person with a TopK number of locations fits the route, but that should not be reversed to the idea that it is possible to link them to a unique person. Precisely because in the chapter 3 described methods, the areas are very large (and not cells). The question is therefore whether the creation of this link is the right one. - In the study, cells ("area covered by base station") are used as geographical boundaries. We use municipalities instead of area cells. This makes it much more difficult to link a route to a device. The article talks about 8,000 base stations. This means that there are 511,808,016,000 unique variants of a top 3 of most visited base stations possible. In our dataset with 355 municipalities there are 44,361,510 unique variants of a top 3 most visited municipalities of each person. This is a factor of approximately 11,500 lower to link a top 3 to a device. In our case, the smaller number of unique variants of a top 3 is also distributed over 2 to 4 million devices instead of 100 thousand. This makes the "uniqueness" of a top 3 combination ("being different from others" mentioned in the article) 200,000 to 400,000 times smaller.

- The "recovery accuracy" will be many times lower because not 100,000 but 4 million devices are in the dataset (see Figure 11 a for decrease accuracy with an increase in the number of devices). This makes it very likely that if a route with the correct TopK locations can be linked to a person, the route will not be correct. This does not enrich the knowledge about that person.

4.2 Attack scenario

In section 4.1 it has been shown that solving equations does not work to reveal individuals. What if someone, an attacker or hacker has additional sources of information at his disposal? What are the consequences then? Suppose the attacker knows all the locations except one during period t (of one hour). In the periods before and after, the attacker also knows for this one device where it is. The purpose of the attacker is to find out where the device was during period t. The attacker knows the location of the device in t-1 and in t+1, and given the maximum travel speed of the device, a number of areas remain in which the device may have been in the meantime. The question now is, to what extent the flow cube can help the attacker with this problem. Let's assume that there is more than one possible area at time t, otherwise the attacker will not need the flow cube anyway. That data does not reveal anything the attacker already knew.

Since the attacker knows the locations of all other devices, the location should be easy to find: he takes the numbers from the flow cube; subtracts the known numbers from it and if all goes well, there is one area left where a difference of one remains. However, there are a number of characteristics of the signalling data and the methodology used to produce the flow cube that make this calculation much more difficult to make than one would initially think. This has the following causes:

- Not all devices show activity. So although the locations of all devices can be traced on the basis of the source dataset, it is not known which of them were actually active during period t. To be sure, it is assumed that the device whose location is sought was active and that this is known to the attacker. As can be seen in Figure 4.1, approximately 20-25 percent of the devices are inactive during the day per hour. An average of 78% is active at 7:00 in the morning.

- The attacker knows where the devices are but is not sure which area the devices are assigned to. Allocation of devices to areas is done via the 3. transmission tower4). Many cell towers provide coverage in multiple areas. Using a model, for a given transmission tower, one device is divided over all areas where antenna provides coverage (the fractions add up to one). The attacker is also not aware to which cell tower antenna a device has been connected (if connected; see previous point).

For many antennas, a connection to this antenna cannot be directly translated to a municipality. So even if the attacker has data about the actual location of each device, there is uncertainty about in which municipality this device will be because of the estimation method. When a transmitter mast serves more than one municipality, a device is counted in weighted fractions in all areas where the antenna provides coverage. For example, a value of 100 devices in the data can consist of 200 times a contribution of 0.5. - For areas where less than 15 devices are estimated, the value is not present in the flow cube. So there are a lot of areas for which the calculation is not possible anyway. The number of cells that is suppressed is very time dependent. At night more table cells are affected but much less devices and vice versa.

Each of the above points makes it impossible for the attacker to determine the location of the device with a reasonable degree of certainty. Assume that the estimation is 0 (zero) devices in a area. Approximately 80% of the devices are observed. The number of observed devices will therefore follow a binomial distribution (with p = 0.8). This means that with 90% probability there may also be one device in that area. If more devices are estimated, the probability interval will only increase. If 10 devices are observed, up to 16 devices can actually be in the area. Since the uncertainty in the number of actual devices in the area is already greater than or equal to one, determining the location of one device becomes very difficult. This example is further elaborated in Appendix 1 as the known numbers of devices do provide additional information.

The effect of point 2 varies greatly per area. In urban areas there more antennas, so antennas less often cover a large area, while in rural areas the masts often cover larger areas. Also when many antennas overlap in coverage area this problem becomes more complex. However, exact figures are not available at this time. As indicated in point 3, for many areas the figures are suppressed. Nothing is known about these areas other than that the estimated number of devices is less than 15.

4.3 Group disclosure and nuance of the N> 15 rule in step 6

The output provided by the telecom company contains a table of which each cell contains more than 15 estimated devices. It is important to understand that these are not real direct counts. It is a Bayesian estimate. So even an outcome from one device is in fact not unique and therefore anonymous. If there is an identification it is purely by chance and a disclosure not certain. However, improper (but as correctly interpreted) disclosure is also undesirable; that is why a lower limit has been set. That is the main reason for this limit.

The example is a bus of students that goes between 2:00 and 3:00 a.m. from the municipality of Groningen to another municipality. If more than 15 of these students have the same provider and are all active in the same municipality in the same hour (there are events of those devices) and happen to be assigned by the geolocation algorithm in the same municipality and there is no other movement of devices with the same origin municipality Groningen to that municipality, then such a group can be recognized in the data provided to Statistics Netherlands.

That looks like disclosure, but in fact they knew they had to look for this group. Agenda information (e.g. a programme publication on the internet) was needed. So no additional information was revealed from this data source in this case, but there was a one-time recognising (or validating) of the results. It is also not possible to recognise individuals within that group. However, certain characteristics can be added to this group, such as the fact that it has travelled via another municipality.

A higher limit N would reduce the chance of group disclosure even more, but only within the SN infrastructure. The limit of N>15, though, has been carefully investigated. Efforts have been made to minimize data in respect to applications: however, the data must remain usable. Figures 4 and 5 show that further increasing of the limit in step 6 leads to outcomes that are ultimately useless. Figure 4.2 shows that the dynamics/flows of the population (green line) disappear with the increase of the border/"threshold", while this information is relevant for making statistics.

Knowing that under very rare circumstances there may be a risk of group disclosure, various safeguards have been taken to prevent this information being used to identify groups of persons in the data provided to CBS. It is prohibited by law for the MNO to provide identifiable information about individuals, and for CBS it is prohibited by law to disclose identifiable information to the public. This prohibition is also supported by organisational and technical measures. For example, access to this data is blocked at CBS and only a few employees of CBS can access these data.

2) In the scientific article trajectories are compiled from the data. The method used in the pilot cannot be used to compile routes, but it can be assumed that from the hourly counts a route can perhaps be compiled.

3) This refers to an origin destination table containing counts in time. For example, at noon in Amsterdam there are 300,000 Amsterdam residents and 500,000 people from surrounding municipalities. By putting the hours next to each other, one can observe the "flow" in the counts. The third dimension is then time, hence the name flow cube, an origin-destination matrix in time.

4) So the attacker knows the locations of all other devices, but that subtracting the flow cube numbers will result in wrong (and possibly negative) numbers. So the attacker should also know which other devices were all active.

5. Conclusion

With the aggregated information published from this method it is impossible to determine the location of individual devices with certainty. This information is therefore anonymous for an attacker, even if he has a lot of additional information at his disposal.

References

[2] Positium.

[3] Tu, Z., Xu, F., Li, Y., Zhang, P., Jin, D. (2018), “A new privacy breach : user trajectory recovery from aggregated mobility data”, IEEE/ACM Trans. on Networking, 26, 3, 1446-1459.

[4] Zang, H. Bolot, J. (2011), “Anonymization of location data does not work: A large-scale measurement study”, In Proceedings of the 17th annual international conference on Mobile computing and networking, 145-156.

[5] De Montjoye, Y.A., Hidalgo, C.A., Verleysen, M., Blondel, V.D. (2013), “Unique in the crowd: The privacy bounds of human mobility”, Nature Scientific reports, 3, p.1376-1381.

Appendix

Further elaboration of point 1: not all devices are active every hour

The attacker wants to determine the region x of the device i.

The area is known for all devices except i.

- Of all devices except i, the region is known.

- We know of device i that it is either in area A or in area B because we have the location of i in t-1 and t + 1. So we limit ourselves here to two possible areas. More possible areas only make the location more uncertain.

- We observe a fraction of the devices.

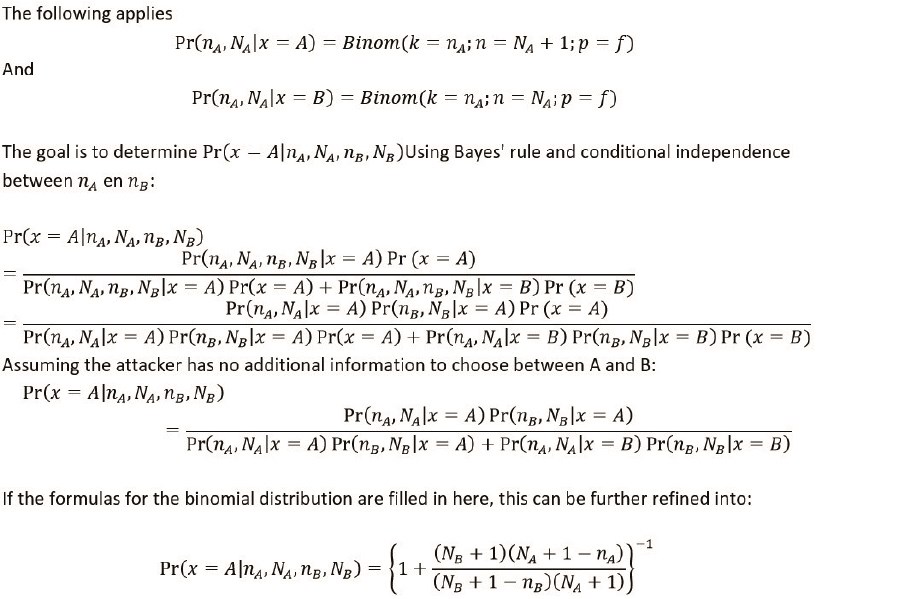

Given the counts per area, , nA en nB, and the known numbers per area, NA en NB, what is the probability that device i is in area A and what is the probability that the device is in area B. The latter automatically follows from the former because the device is either in A or in B.

Figure 5 shows for different values of nA and nB the probability that i is in A zit. NA and NB are chosen a factor 1/0.8 higher than nA and nB, the fraction f is equal to 0.8. The vertical dotted line indicates the boundary under which the digits of a region are suppressed. The horizontal dotted line indicates a probability of 0.1: below this line we know with 90% certainty that i is in region B. For values of nA and nB, greater than 15, each region is more or less equally probable, so the data in the flow cube gives hardly any additional information about the location of the device. indicates the boundary below which the numbers of a area are suppressed.